A colleague and fellow astroimager (Welford Observatory), with whom I swap tips and tricks in the coffee lounge at work, suggested I try PixInsight (PI) for processing some astroimages. I had tried the demo version once before and, like many others, found it very complicated and daunting and gave up quickly.

He pointed me towards Harry’s Astro Shed, where Harry has posted various getting-started video tutorials for PI. So I bit the bullet, paid for a licence (£lots) and got stuck in.

Yes, it’s still very complicated, but with Harry’s help I discovered it’s also very powerful. The calibration routines for applying bias, dark and flat frames to the raw data are the best I’ve ever used. Move over, Nebulosity 3, you’ve been outdone.

After practising on some old images, it was time to try it out on some new data using the recommended workflow.

The first thing to do was to take a new library of dark frames. They shouldn’t change from one imaging session to another, so you can take them once, create a master for each exposure time and use the masters over and over again. I did these on a cloudy night. It’s a bit time consuming, but the system can be left to do its thing.

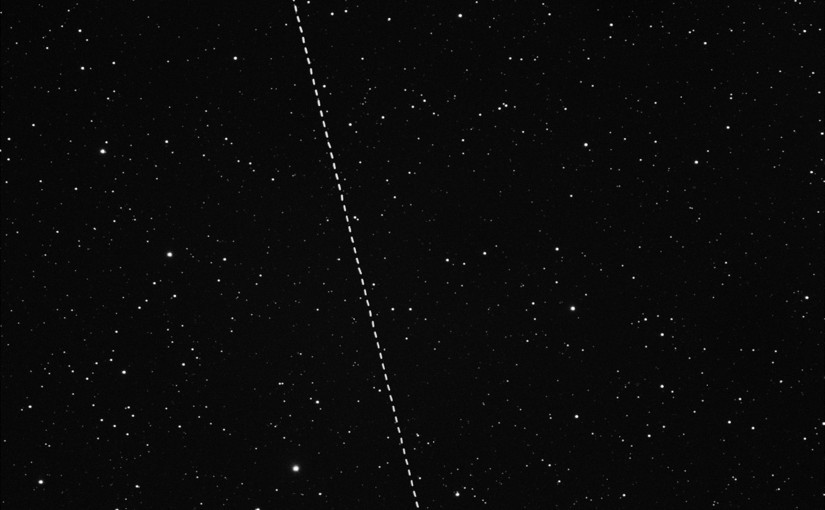

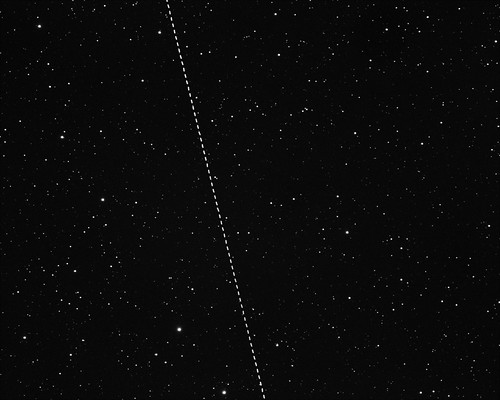
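For anyone wondering what "create a master for each exposure time" boils down to, here is a minimal sketch in plain Python. It is not how PI does it internally. I'm assuming a simple per-pixel median combine, and the function name `build_master_darks` and the tiny 2x2 "frames" are made up purely for illustration.

```python
from collections import defaultdict
from statistics import median


def build_master_darks(dark_frames):
    """Group dark frames by exposure time and median-combine each group.

    dark_frames: list of (exposure_seconds, frame) pairs, where a frame is
    a list of rows of pixel values (hypothetical layout for this sketch).
    Returns a dict mapping exposure_seconds to a master dark frame.
    """
    groups = defaultdict(list)
    for exposure, frame in dark_frames:
        groups[exposure].append(frame)

    masters = {}
    for exposure, frames in groups.items():
        rows, cols = len(frames[0]), len(frames[0][0])
        # A per-pixel median across the stack ignores one-off outliers.
        masters[exposure] = [
            [median(f[r][c] for f in frames) for c in range(cols)]
            for r in range(rows)
        ]
    return masters


# Made-up pixel values, not real camera data.
darks = [
    (300, [[10, 12], [11, 10]]),
    (300, [[11, 12], [10, 90]]),  # 90 simulates a one-off outlier
    (300, [[10, 13], [12, 11]]),
    (600, [[20, 22], [21, 20]]),
]
library = build_master_darks(darks)
print(library[300])
```

The point of keying the library by exposure time is that a five-minute light frame gets matched with the five-minute master dark and nothing else.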

The next night was clear so I decided to have a go at the region around the Horsehead Nebula in Orion. This is a region full of nebulosity which responds well to H-alpha filters. I took 36 exposures of five minutes each. That’s not really long enough for faint nebulosity, but I wanted to get as many exposures as possible in one go as a test of PI.
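As a rough back-of-envelope check on why lots of exposures help, here is the arithmetic as a small sketch. It assumes the noise in each exposure is random and uncorrelated and that the frames are averaged, in which case the signal-to-noise ratio improves roughly with the square root of the number of frames. Real data won't follow this exactly.

```python
import math

n_subs = 36        # number of exposures taken
sub_minutes = 5    # length of each exposure in minutes

total_minutes = n_subs * sub_minutes
# Assumption: random, uncorrelated noise, so SNR scales as sqrt(N).
snr_gain = math.sqrt(n_subs)

print(f"Total integration: {total_minutes} min ({total_minutes / 60:.1f} h)")
print(f"Approximate SNR gain over a single exposure: {snr_gain:.0f}x")
```

So the session adds up to three hours of data, with the stack being very roughly six times cleaner than any single frame under those assumptions.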

I’m often a bit lazy when capturing flats, but this time I was careful to make sure I got good ones. I then calibrated, registered and integrated the images in PI. With some histogram stretching, noise reduction and contrast enhancement, I’ve ended up with possibly the best astroimage I have ever taken.
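If you're curious what calibration and integration do to the numbers, here is a very stripped-down sketch. It is only an illustration of the textbook idea, not PI's actual routines. It assumes the master dark matches the light frame's exposure (so it already contains the bias signal), that the master flat has already been calibrated itself, and that the frames are already registered. The function names and pixel values are invented for the example.

```python
from statistics import mean


def calibrate(light, master_dark, master_flat):
    """Subtract the dark, then divide by the flat normalised to a mean of 1."""
    flat_mean = mean(v for row in master_flat for v in row)
    return [
        [(lt - dk) / (fl / flat_mean) for lt, dk, fl in zip(lrow, drow, frow)]
        for lrow, drow, frow in zip(light, master_dark, master_flat)
    ]


def integrate(frames):
    """Average already-aligned frames pixel by pixel."""
    rows, cols = len(frames[0]), len(frames[0][0])
    return [
        [mean(f[r][c] for f in frames) for c in range(cols)]
        for r in range(rows)
    ]


# Made-up 2x2 frames: the right-hand column is dimmed by "vignetting".
master_dark = [[10, 10], [10, 10]]
master_flat = [[1.25, 0.75], [1.25, 0.75]]
lights = [
    [[135, 85], [135, 85]],
    [[135, 85], [135, 85]],
]

calibrated = [calibrate(lt, master_dark, master_flat) for lt in lights]
stacked = integrate(calibrated)
print(stacked)
```

In this toy case the uneven illumination recorded by the flat is divided out, so both columns end up at the same level after calibration. That is why good flats matter so much.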

Be sure to click through to Flickr and see the full resolution version.

I’m now a total PI convert. It’s expensive and complicated, but now that I’ve got the hang of the basics there’s no stopping me!